Using LLMs in Production

Learn how large language models are deployed in real-world production environments, including system architecture, retrieval augmented generation (RAG), prompt engineering, evaluation, monitoring, and scaling AI-powered applications.

Real Engineering Examples of Using LLMs in Production

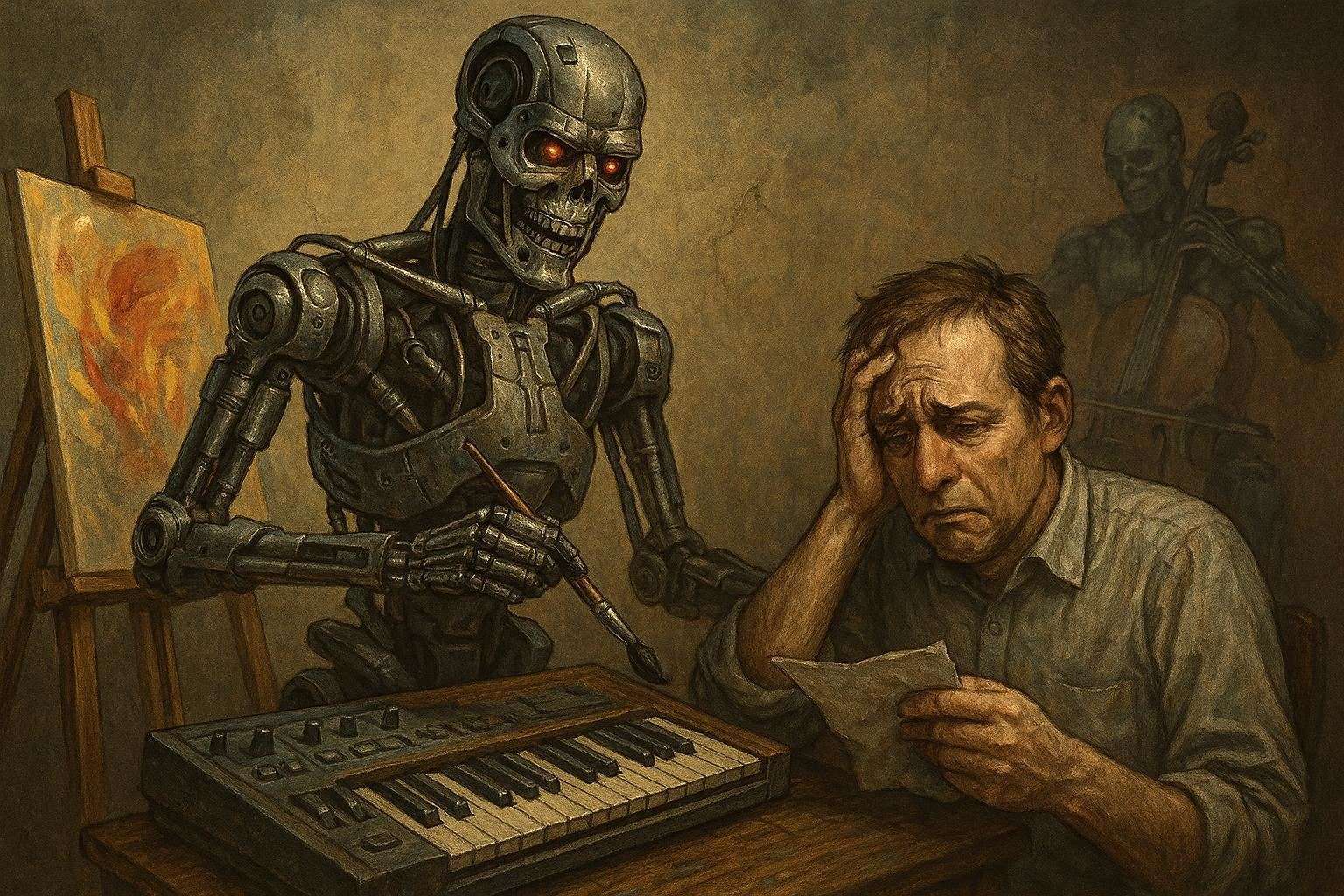

Large Language Models (LLMs) are often demonstrated through creative tasks like writing poems or short stories. However, their real impact appears when they are integrated into production systems.

In many companies, LLMs now power automation pipelines, internal tools, customer support systems, and developer workflows.

This article explores practical engineering examples of how LLMs are used in real-world production environments.

1. Customer Support Ticket Triage

One of the most common production use cases is automatically routing support tickets.

Companies receive thousands of emails or chat requests every day. Traditionally, humans had to read each ticket and assign it to the correct department.

LLMs can automate this step.

Example

Input:

Customer message:

"My monitor keeps turning off after a few minutes. I tried changing the cable but it still fails."

LLM Output:

Category: Hardware Issue

Department: Technical Support

Priority: Medium

Production Architecture

```mermaid
graph TD
    A[User Message] --> B[API Gateway]
    B --> C[Preprocessing Service]
    C --> D[LLM Classification]
    D --> E[Routing System]
    E --> F[Support Team]
```
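
The classification and routing steps could look roughly like the sketch below. It is only an illustration under assumptions: the `ROUTES` table, the prompt wording, and the `fake_llm` stand-in are all hypothetical, and a real system would call an actual model API in its place. The idea is to ask for a fixed `Key: value` layout, parse it, and send anything unexpected to manual review.

```python
# Hypothetical routing table; real categories and departments will differ.
ROUTES = {
    "Hardware Issue": "Technical Support",
    "Billing": "Finance",
    "Account Access": "Customer Success",
}
PRIORITIES = {"Low", "Medium", "High"}


def build_prompt(message):
    """Ask for a fixed 'Key: value' layout so the reply is easy to parse."""
    return (
        "Classify the support ticket below.\n"
        f"Allowed categories: {', '.join(ROUTES)}\n"
        "Allowed priorities: Low, Medium, High\n"
        "Reply with exactly two lines:\n"
        "Category: <category>\n"
        "Priority: <priority>\n\n"
        f"Ticket: {message}"
    )


def parse_reply(reply):
    """Turn 'Key: value' lines into a dict, ignoring any other lines."""
    fields = {}
    for line in reply.splitlines():
        key, sep, value = line.partition(":")
        if sep:
            fields[key.strip().lower()] = value.strip()
    return fields


def triage(message, llm):
    """Classify a ticket and pick a department; fall back to manual review."""
    fields = parse_reply(llm(build_prompt(message)))
    category = fields.get("category")
    priority = fields.get("priority")
    if category not in ROUTES or priority not in PRIORITIES:
        return {"category": None, "department": "Manual Review", "priority": None}
    return {
        "category": category,
        "department": ROUTES[category],
        "priority": priority,
    }


def fake_llm(prompt):
    """Stand-in for a real model call, used only to make the sketch runnable."""
    return "Category: Hardware Issue\nPriority: Medium"


ticket = triage("My monitor keeps turning off after a few minutes.", fake_llm)
print(ticket)
```

Deriving the department from a lookup table, rather than asking the model for it, keeps routing deterministic once the category is known.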

Benefits

- Faster response times

- Reduced manual triage

- Consistent ticket categorization

2. Summarizing Customer Conversations

Support teams often deal with long chat transcripts.

Instead of reading the entire conversation, managers can get a short summary generated by an LLM.

Example

Input:

Customer: My monitor won't turn on

Agent: Can you check the HDMI cable?

Customer: The image appears for a second then disappears

Agent: It may be a faulty cable

LLM Summary:

Customer reported that the monitor image appears briefly and then disappears.

Agent suspects a faulty HDMI cable.

Engineering Implementation

```mermaid
graph TD
    A[Chat Logs] --> B[Message Storage]
    B --> C[Batch Processing Job]
    C --> D[LLM Summarization]
    D --> E[CRM Dashboard]
```

This helps:

- Support managers analyze trends

- Reduce time spent reviewing tickets

- Improve quality control
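
A batch job for this pipeline might be sketched as follows. The row format `(conversation_id, speaker, text)`, the prompt wording, and `fake_llm` are assumptions made for illustration; the real storage schema and model call will look different.

```python
from collections import defaultdict


def group_by_conversation(messages):
    """Group (conversation_id, speaker, text) rows into one transcript per chat."""
    lines = defaultdict(list)
    for conversation_id, speaker, text in messages:
        lines[conversation_id].append(f"{speaker}: {text}")
    return {cid: "\n".join(rows) for cid, rows in lines.items()}


def summarize_batch(messages, llm, max_chars=8000):
    """Return {conversation_id: summary} for every conversation in the batch."""
    summaries = {}
    for cid, transcript in group_by_conversation(messages).items():
        # Crude length guard: keep only the tail of very long transcripts.
        prompt = (
            "Summarize this support conversation in at most three sentences. "
            "Only state what the transcript says.\n\n" + transcript[-max_chars:]
        )
        summaries[cid] = llm(prompt)
    return summaries


def fake_llm(prompt):
    """Stand-in for a real model call: echoes the last transcript line."""
    return prompt.splitlines()[-1]


# Made-up rows standing in for what message storage would return.
rows = [
    ("c1", "Customer", "My monitor won't turn on"),
    ("c2", "Customer", "I was charged twice"),
    ("c1", "Agent", "Can you check the HDMI cable?"),
]
summaries = summarize_batch(rows, fake_llm)
print(summaries)
```

Truncating by characters is a simplification; a production job would more likely count tokens and split long transcripts into chunks.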

3. Internal Knowledge Assistants

Another powerful use case is internal company chatbots.

Engineers, HR teams, and operations staff often need quick answers from internal documentation.

Instead of searching through internal pages or PDFs, employees can ask a question.

Example

Query:

What is our policy for production deployments?

Response:

Production deployments require:

1. Two code approvals

2. Passing CI checks

3. Change ticket in Jira

4. Deployment during allowed windows

Architecture (Retrieval-Augmented Generation)

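Let \(q\) be the user's question and

\[ D = \{d_1, d_2, \dots, d_n\} \]

where \(D\) represents the document set.

The system retrieves:

\[ d^* = \arg\max_{d \in D} \operatorname{sim}(q, d) \]

where \(\operatorname{sim}\) is a similarity score, for example cosine similarity between embeddings.

Then the LLM generates a response conditioned on \(q\) and \(d^*\).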

```mermaid
graph TD
    A[User Question] --> B[Embedding Model]
    B --> C[Vector Database]
    C --> D[Relevant Documents]
    D --> E[LLM Generates Answer]
```
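
To make the retrieval step concrete, here is a minimal sketch that picks the document most similar to the question and builds a grounded prompt from it. The word-count "embedding" and the cosine similarity are stand-ins chosen so the example runs without external services; a real deployment would use an embedding model and a vector database. The sample documents are invented.

```python
import math
import re
from collections import Counter


def embed(text):
    """Toy embedding: word counts. A real system would call an embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[term] * b[term] for term in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query, documents):
    """Return the single document most similar to the query."""
    query_vector = embed(query)
    return max(documents, key=lambda doc: cosine(query_vector, embed(doc)))


def build_prompt(query, context):
    """Condition the model on both the question and the retrieved text."""
    return (
        "Answer the question using only the context below.\n\n"
        f"Context: {context}\n\n"
        f"Question: {query}"
    )


# Invented internal documents, for illustration only.
DOCS = [
    "Production deployments require two code approvals and passing CI checks.",
    "Vacation requests must be submitted two weeks in advance.",
    "Expense reports are reimbursed at the end of each month.",
]

question = "What is our policy for production deployments?"
best = retrieve(question, DOCS)
prompt = build_prompt(question, best)
print(best)
```

Production systems usually retrieve the top few documents rather than a single one, then pass all of them as context.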

Common tools used in production:

- Pinecone

- Weaviate

- Elasticsearch

- OpenSearch

4. Code Generation and Developer Productivity

LLMs are heavily used to improve developer productivity.

Examples include:

- generating boilerplate code

- writing test cases

- explaining legacy code

- refactoring functions

Example

Prompt:

Write a unit test for this Go function using testify

Output:

```go
func TestCalculateTotal(t *testing.T) {
	result := CalculateTotal(5, 10)
	assert.Equal(t, 15, result)
}
```

Developer Workflow

```mermaid
graph TD
    A[Developer Prompt] --> B[IDE Plugin]
    B --> C[LLM API]
    C --> D[Generated Code]
    D --> E[Developer Review]
```

5. Automated Document Processing

Many industries deal with large volumes of documents.

Examples include:

- legal contracts

- insurance claims

- invoices

- medical records

LLMs can convert unstructured text into structured data.

Example

Input document:

Invoice #34821

Customer: ACME Corp

Amount: $1250

Due Date: June 30, 2025

LLM Output:

```json
{
  "invoice_number": "34821",
  "customer": "ACME Corp",
  "amount": 1250,
  "due_date": "2025-06-30"
}
```

Production Workflow

```mermaid
graph TD
    A[Document Upload] --> B[OCR]
    B --> C[Text Extraction]
    C --> D[LLM Parsing]
    D --> E[Structured Database]
```
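
Before parsed output reaches the database, it usually makes sense to check it. The sketch below validates the JSON from the invoice example above against a small schema. The `Invoice` class and the specific rules (required fields, non-negative amount, ISO date) are assumptions for illustration, not a fixed standard.

```python
import json
from dataclasses import dataclass
from datetime import date

REQUIRED_FIELDS = {"invoice_number", "customer", "amount", "due_date"}


@dataclass
class Invoice:
    invoice_number: str
    customer: str
    amount: float
    due_date: date


def parse_invoice(raw):
    """Parse and validate model output; raise ValueError if it is unusable."""
    data = json.loads(raw)  # malformed JSON raises a ValueError subclass
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    missing = REQUIRED_FIELDS - data.keys()
    if missing:
        raise ValueError(f"missing fields: {sorted(missing)}")
    amount = data["amount"]
    # bool is a subclass of int in Python, so reject it explicitly.
    if isinstance(amount, bool) or not isinstance(amount, (int, float)) or amount < 0:
        raise ValueError("amount must be a non-negative number")
    if not isinstance(data["due_date"], str):
        raise ValueError("due_date must be an ISO date string")
    return Invoice(
        invoice_number=str(data["invoice_number"]),
        customer=str(data["customer"]),
        amount=float(amount),
        due_date=date.fromisoformat(data["due_date"]),  # ValueError if invalid
    )


llm_output = (
    '{"invoice_number": "34821", "customer": "ACME Corp", '
    '"amount": 1250, "due_date": "2025-06-30"}'
)
invoice = parse_invoice(llm_output)
print(invoice)
```

A check like this catches malformed or incomplete output, but not values that are well-formed and wrong, so spot checks against the source document remain useful.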

Industries using this heavily:

- finance

- insurance

- logistics

6. Sentiment Analysis for Product Feedback

Companies receive huge volumes of reviews and feedback.

LLMs help classify them into categories such as:

- positive feedback

- complaints

- feature requests

- bug reports

Example

Review:

The food was amazing and the staff were very friendly.

Classification:

Sentiment: Positive

Category: Service Quality

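A simplified probability model can be described as:

\[ \hat{y} = \arg\max_{y} P(y \mid x) \]

where \(x\) is the input text, \(y\) ranges over the sentiment labels, and the model predicts the most likely sentiment \(\hat{y}\) given the input text.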
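
As a small worked example of picking the most likely sentiment, the sketch below turns raw scores into probabilities with a softmax and selects the label with the highest one. The scores are hypothetical numbers invented for the review above, not output from any real model.

```python
import math


def softmax(scores):
    """Convert raw scores into probabilities that sum to 1."""
    peak = max(scores.values())  # subtract the max for numerical stability
    exps = {label: math.exp(s - peak) for label, s in scores.items()}
    total = sum(exps.values())
    return {label: e / total for label, e in exps.items()}


def most_likely(probabilities):
    """Return the label with the highest probability."""
    return max(probabilities, key=probabilities.get)


# Hypothetical raw scores for the restaurant review in the example.
raw_scores = {"Positive": 4.2, "Neutral": 1.0, "Negative": -0.5}
probabilities = softmax(raw_scores)
label = most_likely(probabilities)
print(label)  # Positive
```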

7. AI-Assisted Monitoring and Incident Analysis

Some engineering teams use LLMs to summarize system incidents and logs.

Example log output summarized by an LLM:

Root cause: Database connection pool exhaustion

Impact: API latency increased

Affected service: Payment API

Suggested fix: Increase pool size and monitor for connection leaks

Incident Analysis Pipeline

```mermaid
graph TD
    A[System Logs] --> B[Log Aggregator]
    B --> C[LLM Analysis]
    C --> D[Incident Summary]
    D --> E[Engineering Dashboard]
```
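
Raw logs are usually too long and repetitive to send to a model directly, so the aggregation step often condenses them first. The sketch below keeps only error and warning lines, strips timestamps, and collapses duplicates into counts. The log format, the level names, and the sample lines are assumptions made up for illustration.

```python
import re
from collections import Counter

# Assumes lines start with an ISO-style timestamp, e.g. 2025-01-01T10:00:00Z.
TIMESTAMP = re.compile(r"^\d{4}-\d{2}-\d{2}[T ]\d{2}:\d{2}:\d{2}\S*\s+")


def condense_logs(lines, levels=("ERROR", "WARN"), max_entries=20):
    """Keep selected levels, drop timestamps, and collapse repeated messages."""
    counts = Counter()
    for line in lines:
        message = TIMESTAMP.sub("", line.strip())
        if message.startswith(levels):
            counts[message] += 1
    return [f"{message} (x{n})" for message, n in counts.most_common(max_entries)]


def build_incident_prompt(condensed):
    """Ask for the same fields as the incident summary shown above."""
    return (
        "Summarize the incident from these log lines. Report root cause, "
        "impact, affected service, and a suggested fix.\n\n" + "\n".join(condensed)
    )


# Made-up log lines for demonstration.
logs = [
    "2025-01-01T10:00:00Z INFO payment-api: request ok",
    "2025-01-01T10:00:01Z ERROR payment-api: connection pool exhausted",
    "2025-01-01T10:00:02Z ERROR payment-api: connection pool exhausted",
    "2025-01-01T10:00:03Z WARN payment-api: latency above threshold",
]
condensed = condense_logs(logs)
print(build_incident_prompt(condensed))
```

Collapsing duplicates keeps the prompt short while preserving how often each error occurred, which is often the most useful signal for root-cause analysis.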

Production Challenges When Using LLMs

Using LLMs in production introduces new engineering challenges.

Hallucinations

Models sometimes generate plausible-sounding but incorrect information.

Mitigation strategies:

- Retrieval systems

- Output validation

- Guardrails
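
One simple form of output validation is to accept a reply only if it passes a check, retry a few times, and otherwise hand off to a fallback. The sketch below shows that pattern; the label set, the retry count, and the `flaky_llm` stand-in are illustrative assumptions.

```python
def call_with_validation(prompt, llm, is_valid, max_attempts=3):
    """Retry until the reply passes validation; return None if it never does."""
    for _ in range(max_attempts):
        reply = llm(prompt).strip()
        if is_valid(reply):
            return reply
    return None  # caller should fall back, e.g. to a human reviewer


ALLOWED_LABELS = {"Positive", "Negative", "Neutral"}

# Fake model that answers badly once, then correctly (for demonstration only).
replies = iter(["It is probably fine, I guess.", "Positive"])


def flaky_llm(prompt):
    return next(replies)


label = call_with_validation(
    "Classify the sentiment as Positive, Negative, or Neutral: 'Great service!'",
    flaky_llm,
    lambda reply: reply in ALLOWED_LABELS,
)
print(label)  # Positive
```

This only guarantees that the reply has an allowed shape, not that it is correct, so it complements retrieval and human review rather than replacing them.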

Cost Control

LLM API calls can become expensive at scale.

Common optimizations:

- caching responses

- batching requests

- using smaller models
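
Response caching, the first item above, can be sketched as a thin wrapper around the model call. The in-memory dictionary, the whitespace normalization, and the `demo-model` name are assumptions for illustration; a production setup would more likely use a shared store such as Redis and an expiry policy.

```python
import hashlib


class CachedLLM:
    """Wrap a model call so identical prompts are only paid for once."""

    def __init__(self, llm, model):
        self._llm = llm
        self._model = model
        self._cache = {}
        self.hits = 0
        self.misses = 0

    def _key(self, prompt):
        # Include the model name so switching models does not reuse old replies.
        normalized = " ".join(prompt.split())
        return hashlib.sha256(f"{self._model}\n{normalized}".encode("utf-8")).hexdigest()

    def __call__(self, prompt):
        key = self._key(prompt)
        if key in self._cache:
            self.hits += 1
            return self._cache[key]
        self.misses += 1
        reply = self._llm(prompt)
        self._cache[key] = reply
        return reply


def fake_llm(prompt):
    """Stand-in for a paid API call."""
    return f"reply to: {prompt}"


cached = CachedLLM(fake_llm, model="demo-model")
cached("Classify this ticket: monitor turns off")
cached("Classify this ticket:   monitor turns off")  # same prompt, extra spaces
print(cached.hits, cached.misses)  # 1 1
```

Caching fits tasks like classification, where the same input should always give the same answer; it is a poorer fit for open-ended generation where varied output is wanted.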

Latency

LLM responses can be slower than traditional APIs.

Engineering solutions include:

- streaming responses

- asynchronous pipelines

- local model deployment
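
An asynchronous pipeline can hide much of the per-request latency when many items need processing. The sketch below runs several model calls concurrently with `asyncio`, using a semaphore to cap how many are in flight. `fake_llm` simulates network delay, and the concurrency limit is an arbitrary assumption; real limits depend on the provider's rate limits.

```python
import asyncio


async def fake_llm(prompt):
    """Stand-in for an async API call; the sleep simulates network latency."""
    await asyncio.sleep(0.01)
    return prompt.upper()


async def run_all(prompts, llm, max_concurrency=5):
    """Process prompts concurrently, with at most max_concurrency in flight."""
    semaphore = asyncio.Semaphore(max_concurrency)

    async def worker(prompt):
        async with semaphore:
            return await llm(prompt)

    # gather returns results in the same order as the input prompts.
    return await asyncio.gather(*(worker(p) for p in prompts))


results = asyncio.run(run_all(["ticket one", "ticket two", "ticket three"], fake_llm))
print(results)
```

With real API calls, total time approaches that of the slowest request in each group of concurrent calls, instead of the sum of all requests.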

Final Thoughts

LLMs are not just chatbots.

They are becoming general-purpose tools for automating text-heavy workflows.

The most successful applications focus on tasks where:

- large volumes of text must be processed

- humans currently perform repetitive analysis

- results can be validated programmatically

We are still in the early stages of this transformation, and many new production patterns are emerging.